Medical Device Software Bill of Materials

Cyber attacks on medical devices are increasing

Cyber-attacks against healthcare organizations increased by 74% last year, and attacks on the software supply chain have increased by an average of 610% per year since 2020. These attacks take many forms, and they can undermine the functionality and reliability of medical devices and the software that runs on them. This, in turn, can lead to mistrust of the device and its manufacturer, and to lasting brand damage.

Most medical devices run software, and they often connect to software on computers and smartphones, such as desktop clients and mobile apps, to share and exchange data. Having software components in all of these places adds security challenges that many medical device suppliers aren’t properly prepared to address. This is one of the reasons the US Department of Health and Human Services (HHS) and the Food and Drug Administration (FDA), an agency within HHS, have introduced new requirements for medical device suppliers.

Traditionally, manufacturers of medical devices have not had visibility into what went into the software and firmware running on their devices. This has led many organizations to look for better ways to give themselves, and their users, confidence in the security of medical equipment.

Government creates rules to address attacks

Under new US government requirements, medical device suppliers submitting devices to the FDA for premarket review must submit a plan to monitor, identify, and address cybersecurity issues. They must also create processes that provide reasonable assurance that their device is protected. In addition, they must make security updates and patches available on a regular schedule and, for critical vulnerabilities, outside that schedule. Finally, they must provide the FDA with a software bill of materials, including any open-source or other software components their devices use.

SBOMs can help address software supply chain risks in medical devices

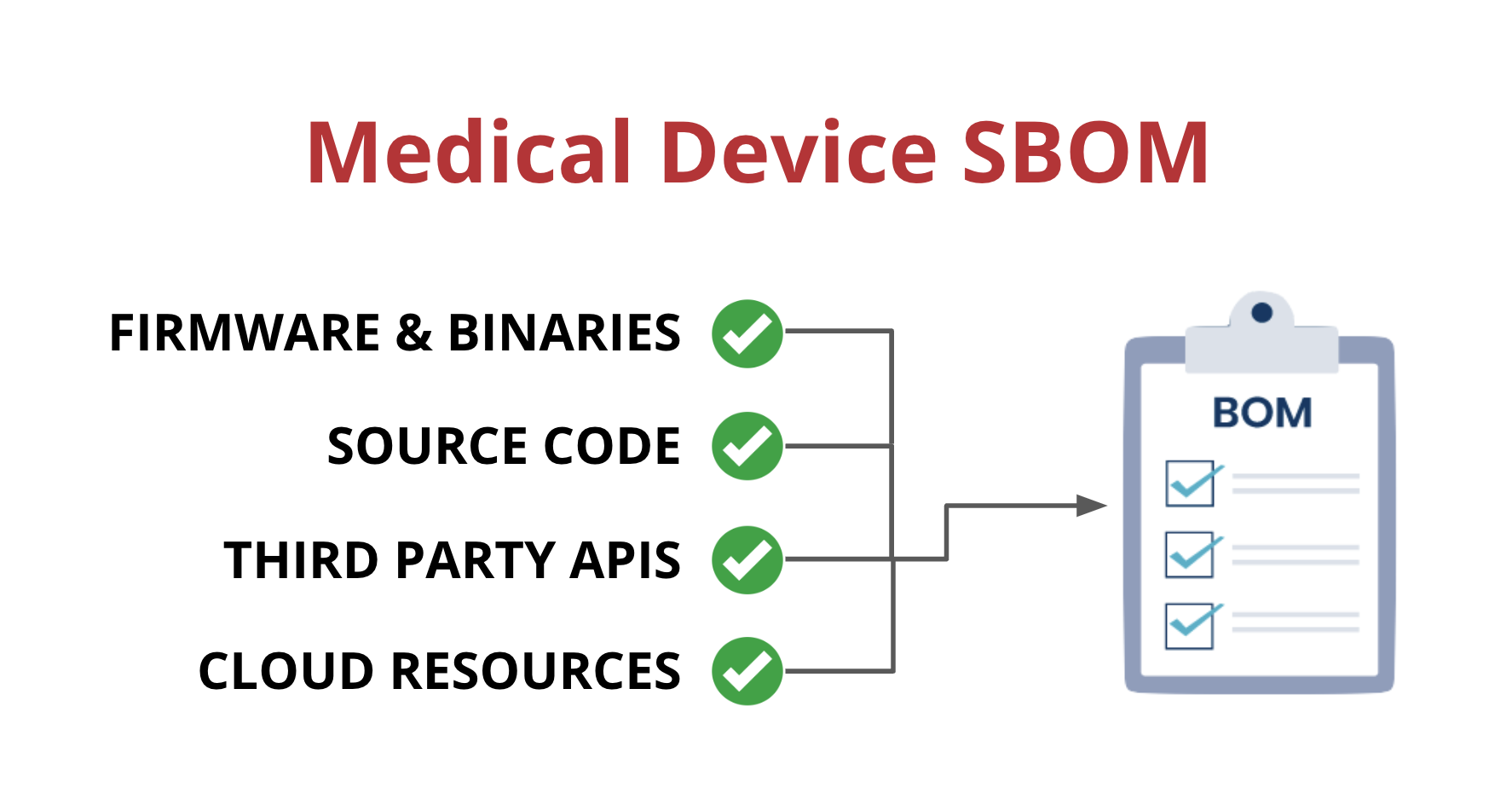

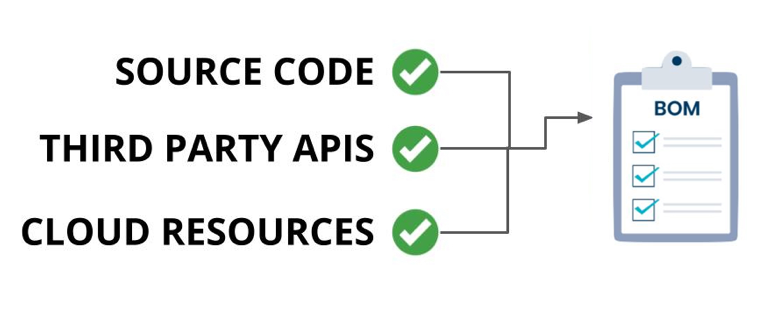

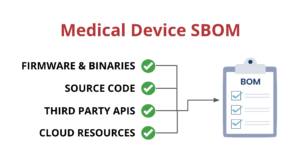

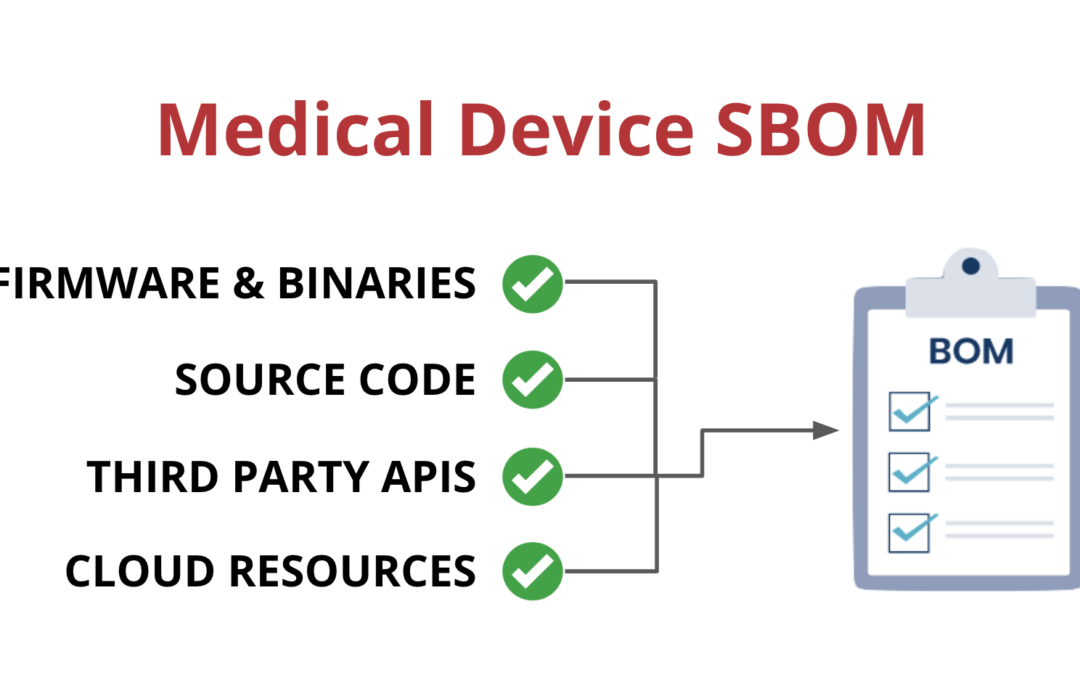

One way that medical device manufacturers can improve visibility is by implementing a Software Bill of Materials (SBOM). An SBOM is a comprehensive list of all the software components that run on, or are used by, a medical device. This includes:

- Open-source and third-party software components

- Firmware and binaries

- Cloud resources

- APIs the device interacts with, or sends data to
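To make the list above concrete, here is a minimal sketch of an SBOM in the CycloneDX JSON format, one of the two widely used SBOM standards alongside SPDX. It assumes CycloneDX 1.5, where firmware and libraries go under `components` and external APIs or cloud services go under `services`. Every name, version, and URL in it is hypothetical, as is the `summarize` helper.

```python
import json
from collections import Counter

# Hypothetical SBOM for an imaginary device; all names, versions and URLs are made up.
EXAMPLE_SBOM = {
    "bomFormat": "CycloneDX",
    "specVersion": "1.5",
    "version": 1,
    "components": [
        # Firmware flashed onto the device itself.
        {"type": "firmware", "name": "pump-controller-fw", "version": "4.2.0"},
        # Open-source library used by the companion desktop application.
        {"type": "library", "name": "requests", "version": "2.31.0",
         "purl": "pkg:pypi/requests@2.31.0"},
        # Third-party library embedded in the firmware image.
        {"type": "library", "name": "mbedtls", "version": "3.5.1"},
    ],
    "services": [
        # Cloud API the device sends readings to.
        {"name": "telemetry-api",
         "endpoints": ["https://telemetry.example.com/v1/readings"]},
    ],
}


def summarize(sbom: dict) -> dict:
    """Count components by CycloneDX type and add the number of external services."""
    counts = Counter(c["type"] for c in sbom.get("components", []))
    counts["service"] = len(sbom.get("services", []))
    return dict(counts)


if __name__ == "__main__":
    print(json.dumps(EXAMPLE_SBOM, indent=2))
    print(summarize(EXAMPLE_SBOM))
```

Keeping APIs in `services` rather than `components` lets one document describe both what ships on the device and what the device talks to.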

The implementation of SBOMs is especially crucial for the medical device industry. Because of the risks that software vulnerabilities in medical devices pose, regulatory bodies such as the FDA have made SBOMs mandatory for medical device manufacturers.

With an SBOM in place, manufacturers can identify vulnerabilities and take action to address them before they can be exploited by attackers. This can help reduce the risk of harm to patients and ensure that medical devices are safe and reliable.

Government and industry requirements for SBOM

- US Federal Government: On December 29, 2022, President Biden signed H.R. 2617, the Consolidated Appropriations Act, 2023, into law. The act includes several cybersecurity provisions that require medical device manufacturers to ensure their devices meet certain minimum cybersecurity standards. These requirements take effect 90 days after the bill's enactment and include:

  - A plan to monitor, identify, and address cybersecurity vulnerabilities and exploits that arise after a product has been released to the market, including coordinated vulnerability disclosure and the release of software and firmware updates and patches that fix those vulnerabilities.

  - A Software Bill of Materials (SBOM), provided to the FDA, that covers the commercial, open-source, and off-the-shelf software components used by the device.

- FDA: The Food and Drug Administration (FDA) has published the “Cybersecurity in Medical Devices” guidance document, and it collects all of its cybersecurity guidance on its website.

- IMDRF: The International Medical Device Regulators Forum has published two documents relevant to SBOM: “Principles and Practices for Medical Device Cybersecurity” and “Principles and Practices for Software Bill of Materials for Medical Device Cybersecurity”.

- US Department of Health and Human Services: HHS has published the “Health Industry Cybersecurity Practices: Managing Threats and Protecting Patients (HICP)” document.

- Australian Department of Health and Aged Care: The Therapeutic Goods Administration (TGA) has released the “Medical Device Cyber Security Guidance for Industry” document.

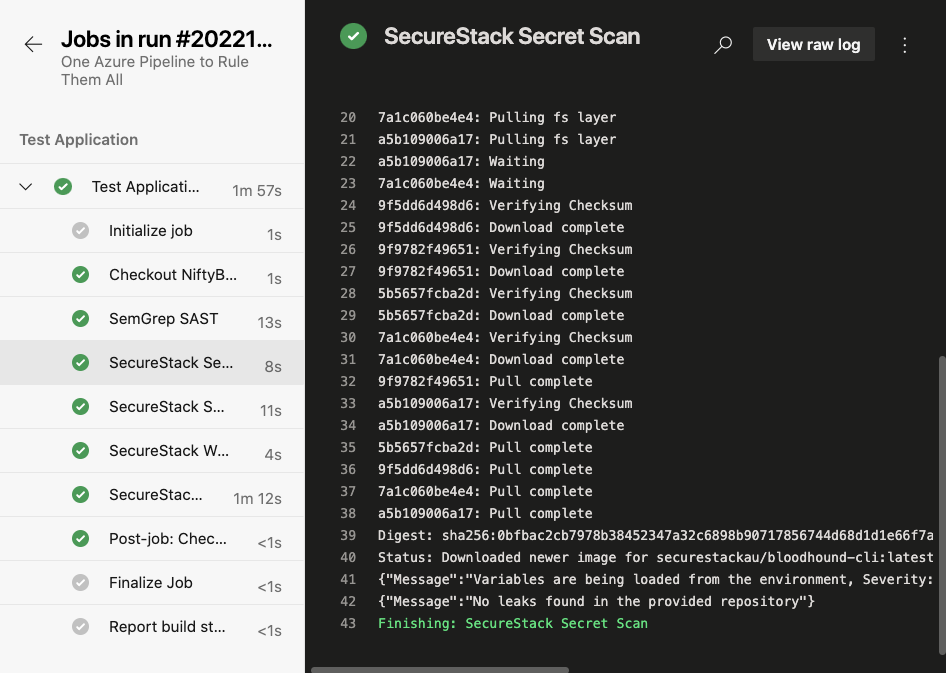

How do medical device suppliers get started with SBOM?

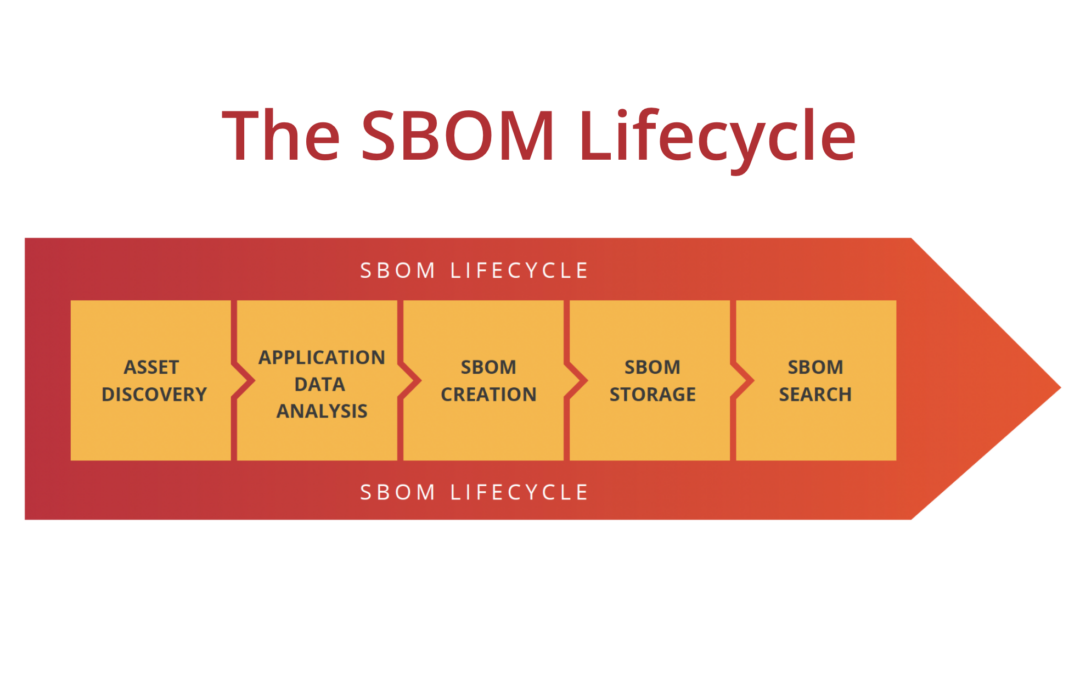

All of the agencies and bodies mentioned above recommend that SBOMs be created automatically as part of the software development process. These SBOMs should also describe, as accurately as possible, the actual application running on, or connected to, the medical device it supports. To do this, organizations need a process that identifies the different steps involved in software development, creates the SBOMs, and then makes them available so the business can gain value from them. We believe the best way to do that is to create an SBOM lifecycle policy.

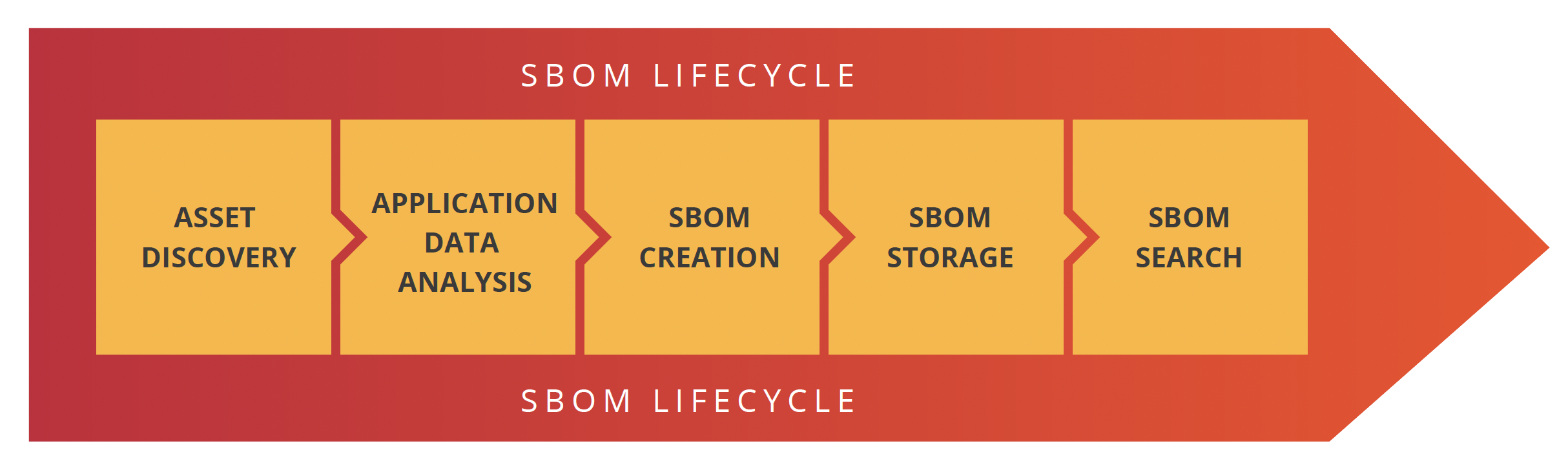

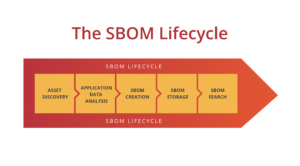

There are five stages in the SBOM lifecycle: asset discovery, application data analysis, SBOM creation, SBOM storage, and SBOM searchability.

Let’s go through each of these stages one at a time.

Asset discovery

Medical device suppliers typically build multiple software components for their customers:

- Firmware and binaries

- Client applications that run on a customer’s computer

- APIs to send medical data to

- Mobile applications that run on smartphones

The SBOMs you create need to cover all four of these asset types, but most SBOM tools available today do not.
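Asset discovery can start as simply as walking a source tree and flagging files that hint at each of the four asset types. The sketch below is a rough heuristic, not a complete detector: the file-name rules and the `discover_assets` function are hypothetical, and a file such as `package.json` could belong to a desktop, web, or mobile app.

```python
from pathlib import Path

# Hypothetical heuristics mapping file names/extensions to asset types.
# Real projects will need their own rules.
FIRMWARE_SUFFIXES = {".bin", ".hex", ".elf"}
MANIFESTS = {
    "client_app": {"requirements.txt", "package.json", "pom.xml"},
    "mobile_app": {"build.gradle", "build.gradle.kts", "Podfile", "Package.swift"},
    "api": {"openapi.yaml", "openapi.json", "swagger.json"},
}


def discover_assets(root: Path) -> dict[str, list[Path]]:
    """Walk a source tree and group candidate SBOM inputs by asset type."""
    found: dict[str, list[Path]] = {
        "firmware": [], "client_app": [], "mobile_app": [], "api": []
    }
    for path in sorted(root.rglob("*")):
        if not path.is_file():
            continue
        # Firmware images are recognized by extension.
        if path.suffix.lower() in FIRMWARE_SUFFIXES:
            found["firmware"].append(path.relative_to(root))
            continue
        # Everything else is recognized by well-known manifest/spec file names.
        for asset_type, names in MANIFESTS.items():
            if path.name in names:
                found[asset_type].append(path.relative_to(root))
    return found
```

Calling `discover_assets(Path("."))` from the root of a repository returns relative paths that later stages can feed into SBOM generation.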

Application data analysis

In this stage, the data needed to create an SBOM is collected about the target device, its firmware, and its client applications. Historically, SBOM tools got this information from package manifest files, but today that is typically not enough. Modern SBOMs can also incorporate data from cloud providers, third-party services, and SaaS solutions in addition to source code.
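As noted above, manifests are only one input, but they are the usual starting point. This minimal sketch covers just that part for a Python client application: it turns pinned lines of a pip `requirements.txt` into library components with Package URLs (purls). The `parse_requirements` function is hypothetical, and unpinned requirements, editable installs, and URLs are deliberately skipped.

```python
import re

# Matches pinned requirements such as "requests==2.31.0" or "requests[socks]==2.31.0".
# Unpinned lines (">=", "~="), editable installs and URLs are skipped in this sketch.
PINNED = re.compile(r"^([A-Za-z0-9][A-Za-z0-9._-]*)(?:\[[^\]]*\])?\s*==\s*([^\s;]+)")


def parse_requirements(text: str) -> list[dict]:
    """Turn a pip requirements file into CycloneDX-style library components."""
    components = []
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments and surrounding whitespace
        match = PINNED.match(line)
        if not match:
            continue
        name, version = match.groups()
        # The purl spec normalizes PyPI names to lowercase with "_" replaced by "-".
        purl_name = name.lower().replace("_", "-")
        components.append({
            "type": "library",
            "name": name,
            "version": version,
            "purl": f"pkg:pypi/{purl_name}@{version}",
        })
    return components
```

Data from cloud providers or SaaS APIs would be gathered by separate collectors and merged into the same component list.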

SBOM creation, attestation & signature

In this stage, the actual SBOM file is created, including all the relevant application data. Typically, you will want this to happen every time your engineering teams deploy a new version of the application. After all, if you aren’t building an SBOM every time you deploy a new version, how can your SBOM match the state of your production app?
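A deploy step can wrap whatever components were collected into a CycloneDX document stamped with the release it describes. This is a sketch assuming CycloneDX 1.5; `build_sbom`, `write_sbom`, and the `<name>-<version>.cdx.json` file-naming convention are hypothetical choices, not a standard.

```python
import json
import uuid
from datetime import datetime, timezone
from pathlib import Path


def build_sbom(app_name: str, app_version: str, components: list[dict]) -> dict:
    """Wrap collected components in a CycloneDX document tied to one release."""
    return {
        "bomFormat": "CycloneDX",
        "specVersion": "1.5",
        # A fresh serial number identifies this particular SBOM document.
        "serialNumber": f"urn:uuid:{uuid.uuid4()}",
        "version": 1,
        "metadata": {
            "timestamp": datetime.now(timezone.utc).isoformat(timespec="seconds"),
            # The application release this SBOM describes.
            "component": {"type": "application", "name": app_name, "version": app_version},
        },
        "components": components,
    }


def write_sbom(sbom: dict, out_dir: Path) -> Path:
    """Write the SBOM into the build output directory, named after the release."""
    meta = sbom["metadata"]["component"]
    out_dir.mkdir(parents=True, exist_ok=True)
    path = out_dir / f"{meta['name']}-{meta['version']}.cdx.json"
    path.write_text(json.dumps(sbom, indent=2), encoding="utf-8")
    return path
```

Running this in the same CI job that ships the release, with the version taken from the build, keeps each SBOM tied to exactly one deployed version.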

Finally, and most importantly, these SBOMs need to be signed by a third party to attest to their validity.
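A real attestation relies on a signer's private key and dedicated tooling (for example Sigstore's cosign), which is out of scope here. What the standard library can show is the step every signing scheme depends on: a deterministic digest of the SBOM, so the same content always yields the same hash and any edit is detectable. The function names are hypothetical, and sorted-key JSON is a simplification of formal canonicalization schemes.

```python
import hashlib
import hmac
import json


def canonical_bytes(sbom: dict) -> bytes:
    """Serialize the SBOM deterministically (sorted keys, no extra whitespace).

    Simplification: a production pipeline might use a formal scheme such as
    RFC 8785 (JSON Canonicalization Scheme) instead.
    """
    return json.dumps(sbom, sort_keys=True, separators=(",", ":"), ensure_ascii=False).encode("utf-8")


def sbom_digest(sbom: dict) -> str:
    """SHA-256 digest of the canonical SBOM; this is the value a signer would attest to."""
    return hashlib.sha256(canonical_bytes(sbom)).hexdigest()


def digest_matches(sbom: dict, expected_hex: str) -> bool:
    """Compare a received SBOM against a recorded digest in constant time."""
    return hmac.compare_digest(sbom_digest(sbom), expected_hex)
```

Because key order no longer affects the digest, two tools that serialize the same SBOM differently still produce the same value for the signature to cover.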

SBOM storage

SBOMs need to be stored centrally and protected by a rich authorization layer. This central store can be an S3 bucket or another secure managed file server, but make sure the SBOMs are encrypted at rest! Otherwise, you might run afoul of compliance requirements.
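If the store is an S3 bucket, one way to enforce encryption is a bucket policy that rejects non-TLS requests and uploads that don't request KMS server-side encryption. This sketch generates such a policy; the bucket name and `sbom_bucket_policy` function are hypothetical, and the authorization rules for who may read or write SBOMs would still need their own statements or IAM policies.

```python
import json


def sbom_bucket_policy(bucket: str) -> dict:
    """Build an S3 bucket policy that only accepts TLS requests and SSE-KMS uploads."""
    bucket_arn = f"arn:aws:s3:::{bucket}"
    objects_arn = f"{bucket_arn}/*"
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                # Refuse any request that is not sent over TLS.
                "Sid": "DenyInsecureTransport",
                "Effect": "Deny",
                "Principal": "*",
                "Action": "s3:*",
                "Resource": [bucket_arn, objects_arn],
                "Condition": {"Bool": {"aws:SecureTransport": "false"}},
            },
            {
                # Refuse uploads that request an encryption type other than SSE-KMS.
                "Sid": "DenyWrongEncryptionHeader",
                "Effect": "Deny",
                "Principal": "*",
                "Action": "s3:PutObject",
                "Resource": objects_arn,
                "Condition": {"StringNotEquals": {"s3:x-amz-server-side-encryption": "aws:kms"}},
            },
            {
                # Refuse uploads that omit the encryption header altogether.
                "Sid": "DenyMissingEncryptionHeader",
                "Effect": "Deny",
                "Principal": "*",
                "Action": "s3:PutObject",
                "Resource": objects_arn,
                "Condition": {"Null": {"s3:x-amz-server-side-encryption": "true"}},
            },
        ],
    }


if __name__ == "__main__":
    print(json.dumps(sbom_bucket_policy("example-sbom-store"), indent=2))
```

The printed JSON can be saved to a file and applied with `aws s3api put-bucket-policy --bucket example-sbom-store --policy file://policy.json`. The last statement is strict: it may also reject clients that rely on the bucket's default encryption instead of sending the header, so relax it if that fits your setup better.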

SBOM searchability

Finally, customers need to be able to search across some or all of their SBOMs. All of the earlier stages exist to enable this. Searchability is the most important part of the SBOM process because the searchable SBOM store is the central source of truth: it is how you look up vulnerable software in your environment and find which applications to tackle.
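At its simplest, searching means asking "which applications contain this component, at which versions?". The sketch below answers that over a list of CycloneDX-style SBOMs using exact name and version matching; the `find_component` function and the sample data are hypothetical, and real vulnerability matching usually works with version ranges from an advisory database rather than exact strings.

```python
from typing import Optional


def find_component(
    sboms: list[dict], name: str, affected_versions: Optional[set[str]] = None
) -> list[tuple[str, str, str]]:
    """Return (application, application version, component version) for each SBOM
    containing the named component, optionally limited to affected versions."""
    hits = []
    for sbom in sboms:
        app = sbom.get("metadata", {}).get("component", {})
        for comp in sbom.get("components", []):
            # Case-insensitive name match.
            if comp.get("name", "").lower() != name.lower():
                continue
            # Exact version match only; ranges would need ecosystem-aware comparison.
            if affected_versions is not None and comp.get("version") not in affected_versions:
                continue
            hits.append((app.get("name", "?"), app.get("version", "?"), comp.get("version", "?")))
    return hits
```

For example, with one SBOM per deployed application, `find_component(sboms, "log4j-core", {"2.14.1"})` lists only the releases that still ship that exact version.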

Many SBOM tools don’t support medical device SBOMs

Most SBOM tools that exist today focus on showing customers some of the open-source libraries used in their applications. Moreover, most SBOMs are stand-alone JSON documents that, by themselves, don’t provide much value. Even worse, many SBOMs are created once and never updated, so they quickly fall out of sync with the devices and applications they are supposed to describe. Medical device manufacturers need to make sure their SBOMs are up-to-date and accurate.
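One way to catch a stale SBOM is to compare the components it records with what the current build actually contains. This is a minimal sketch with hypothetical function names; it keys components by lower-cased name, so it assumes one version of each package per application.

```python
def _index(components: list[dict]) -> dict[str, str]:
    """Map lower-cased component name to version (assumes one version per name)."""
    return {c["name"].lower(): c.get("version", "") for c in components}


def sbom_drift(recorded: list[dict], current: list[dict]) -> dict[str, list]:
    """Report components added, removed, or changed since the SBOM was recorded."""
    old, new = _index(recorded), _index(current)
    return {
        "added": sorted(new.keys() - old.keys()),
        "removed": sorted(old.keys() - new.keys()),
        "changed": sorted(
            (n, old[n], new[n]) for n in old.keys() & new.keys() if old[n] != new[n]
        ),
    }


def has_drift(drift: dict[str, list]) -> bool:
    """True if the recorded SBOM no longer matches the current build."""
    return any(drift.values())
```

A CI job could fail, or regenerate the SBOM, whenever `has_drift` returns `True`.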

Automate SBOMs for medical devices and client applications!

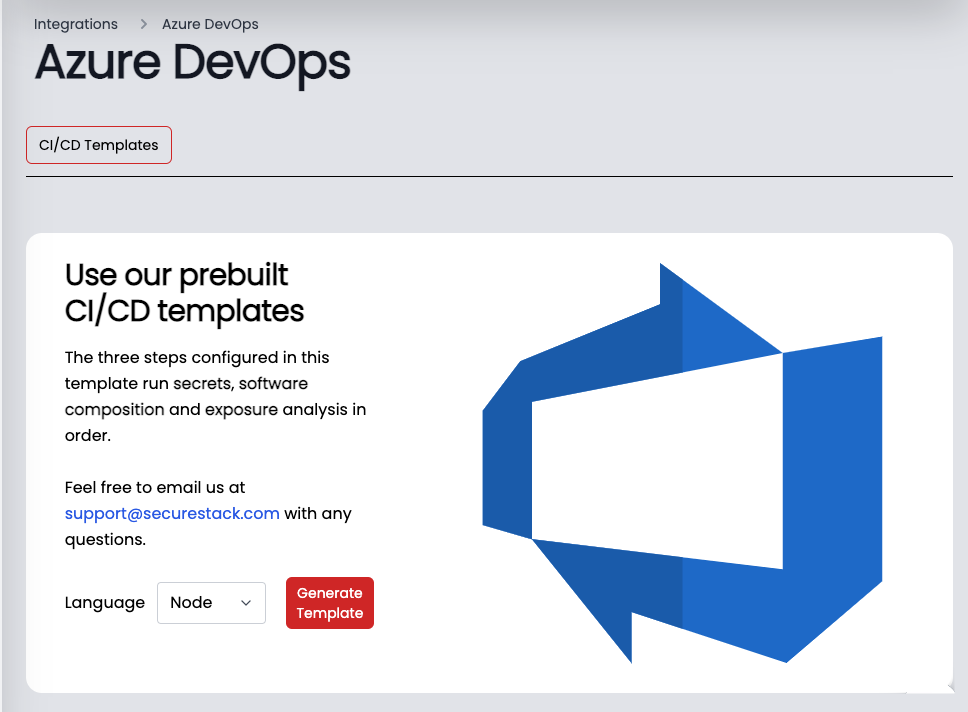

SecureStack automates the whole process of collecting data and building SBOMs from beginning to end. We sign and attest our SBOMs so you can provide our reports and data to the government, partners, auditors, or anyone else you choose to. Our platform delivers all stages of the SBOM lifecycle in a fully integrated solution that is incredibly easy to onboard. We find all your application assets, automate the creation of your SBOMs, store them for you in a secure central repository, and make them searchable.


Paul McCarty

Founder of SecureStack

DevSecOps evangelist, entrepreneur, father of 3 and snowboarder

Forbes Top 20 Cyber Startups to Watch in 2021!

Mentioned in KuppingerCole's Leadership Compass for Software Supply Chain Security!